The two regressors are created with different depth limits, and the data and both sets of predictions are then plotted:

- `Regression_1 = DecisionTreeRegressor(max_depth=2)`
- `Regression_2 = DecisionTreeRegressor(max_depth=5)`
- `plot.scatter(X, y, s=20, edgecolor="black", c="pink", label="data")`
- `plot.plot(X_test, Y1, color="blue", label="max_depth=2", linewidth=2)`
- `plot.plot(X_test, Y2, color="green", label="max_depth=5", linewidth=2)`

After running the above code we get the following output, in which we can see that the decision tree regressor is plotted.

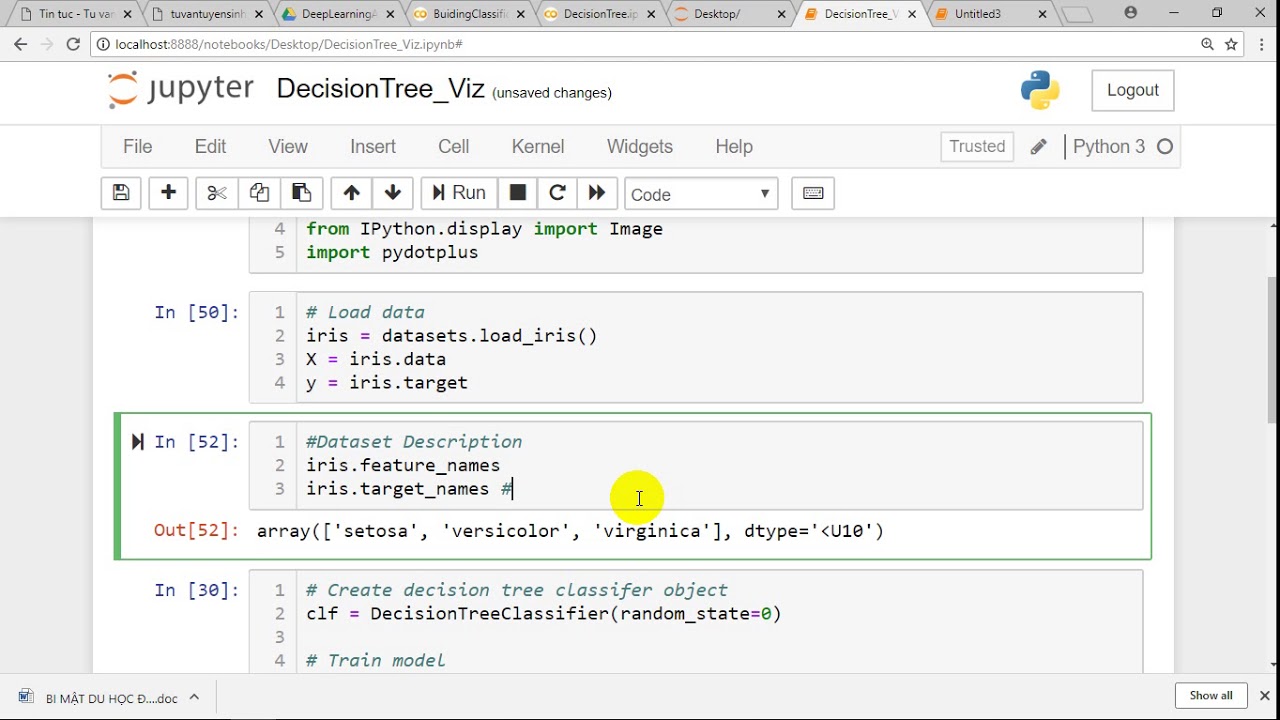

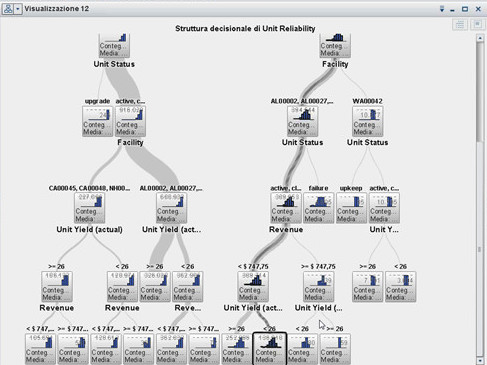
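The regressor steps can be assembled into one runnable sketch. The synthetic noisy sine data, the random seed, and the `X_test` grid are assumptions made for illustration, since the data used in the original walkthrough is not shown here. The legend labels are set to match the `max_depth` values of the two models.

```python
import numpy as np
import matplotlib
matplotlib.use("Agg")  # non-interactive backend, so the sketch also runs without a display
import matplotlib.pyplot as plot
from sklearn.tree import DecisionTreeRegressor

# Synthetic data (an assumption): a sine curve with noise on every fifth target.
rng = np.random.RandomState(1)
X = np.sort(5 * rng.rand(80, 1), axis=0)
y = np.sin(X).ravel()
y[::5] += 3 * (0.5 - rng.rand(16))

# Two regressors with different depth limits.
Regression_1 = DecisionTreeRegressor(max_depth=2)
Regression_2 = DecisionTreeRegressor(max_depth=5)
Regression_1.fit(X, y)
Regression_2.fit(X, y)

# Predict on a dense grid so the step-shaped fits are visible.
X_test = np.arange(0.0, 5.0, 0.01)[:, np.newaxis]
Y1 = Regression_1.predict(X_test)
Y2 = Regression_2.predict(X_test)

# Plot the training data and both fitted curves.
plot.figure()
plot.scatter(X, y, s=20, edgecolor="black", c="pink", label="data")
plot.plot(X_test, Y1, color="blue", label="max_depth=2", linewidth=2)
plot.plot(X_test, Y2, color="green", label="max_depth=5", linewidth=2)
plot.xlabel("data")
plot.ylabel("target")
plot.legend()
# In an interactive session, drop the Agg line above and call plot.show() here.
```

A depth-2 tree can produce at most four distinct predictions, so its curve is a coarse staircase, while the depth-5 tree follows the data (including the noise) more closely.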

Scikit learn decision tree regressor

Before moving forward, we should have some knowledge about regressors.

- A regressor predicts values. Regression is a modeling technique that investigates the relationship between dependent and independent variables.
- A regressor has dependent and independent variables.
- Here the dependent variable works as the response and the independent variables work as features.
- A regressor helps us understand how the value of the dependent variable changes in response to an independent variable.

The code for this section imports the libraries it needs: `import numpy as np`, `from sklearn.tree import DecisionTreeRegressor`, and `import matplotlib.pyplot as plot`.

Read: Scikit learn accuracy_score

Scikit learn decision tree classifier example

As we know, a decision tree is used for predicting a value, and it is a non-parametric supervised learning method. This example imports the graphviz library.

- Graphviz is an open-source module that is used to create graphs.
- `tree.export_graphviz(clf, out_file=None)` exports the fitted tree in DOT format; with `out_file=None` it returns the DOT source as a string.
- Further keyword arguments to `tree.export_graphviz()` control what is shown inside the tree graph.
- `graphviz.Source(dotdata)` builds a graph object from the DOT source so that it can be rendered.

The export call appears twice in the code: once as `dotdata = tree.export_graphviz(clf, out_file=None)`, and once in a longer form that begins `dotdata = tree.export_graphviz(clf, out_file=None,` and passes further arguments.

After running the code, the output shows a decision tree graph drawn with the help of Graphviz.
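The Graphviz export can be sketched as follows. The iris dataset, `random_state=0`, and the keyword arguments in the second call (`feature_names`, `class_names`, `filled`, `rounded`) are assumptions chosen for illustration; the arguments of the original second call are not shown in the text. The `graphviz` Python package is optional here, and rendering the graph to an image additionally needs the Graphviz binaries installed.

```python
from sklearn import tree
from sklearn.datasets import load_iris

# Fit a classifier to export (iris is an assumed example dataset).
iris = load_iris()
clf = tree.DecisionTreeClassifier(random_state=0)
clf = clf.fit(iris.data, iris.target)

# With out_file=None, export_graphviz returns the DOT source as a string.
dotdata = tree.export_graphviz(clf, out_file=None)

# Assumed keyword arguments: label the nodes with feature and class names.
dotdata_labeled = tree.export_graphviz(
    clf,
    out_file=None,
    feature_names=iris.feature_names,
    class_names=iris.target_names,
    filled=True,
    rounded=True,
)

# graphviz.Source wraps the DOT text in a renderable object.
# The import is guarded because the package may not be installed.
try:
    import graphviz
    graph = graphviz.Source(dotdata_labeled)
except ImportError:
    graph = None

print(dotdata[:60])
```

In a notebook, evaluating `graph` displays the tree; elsewhere, the DOT string can be written to a file and rendered with the Graphviz command-line tools.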


In this section, we will learn how to make a scikit-learn decision tree example in Python.
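A minimal sketch of such an example, assuming the iris dataset that ships with scikit-learn. The `random_state=0` setting and the text rendering through `tree.export_text` are additions made here for reproducibility and for output that works without a display; the variable name `clasifier` follows the spelling used in this tutorial.

```python
import matplotlib
matplotlib.use("Agg")  # non-interactive backend, so plotting works without a display
import matplotlib.pyplot as plot
from sklearn import tree
from sklearn.datasets import load_iris

# Load the dataset: X holds the feature values, Y the class labels.
iris = load_iris()
X, Y = iris.data, iris.target

# Create the classifier and fit it to the data.
clasifier = tree.DecisionTreeClassifier(random_state=0)
clasifier = clasifier.fit(X, Y)

# Draw the fitted tree on a matplotlib figure.
plot.figure(figsize=(10, 6))
annotations = tree.plot_tree(clasifier)

# Print the same tree as indented text rules.
print(tree.export_text(clasifier, feature_names=list(iris.feature_names)))

# Predict the class of the first sample.
predicted = clasifier.predict(X[:1])
print(iris.target_names[predicted[0]])
```

Because the tree is grown without a depth limit, it fits the training data very closely, so accuracy measured on `X, Y` itself says little about performance on new data.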

Read: Scikit-learn Vs Tensorflow

Scikit learn decision tree classifier

In this section, we will learn how to create a scikit-learn decision tree classifier in Python.

- A decision tree is a non-parametric supervised learning method used for classification and regression; it predicts a value.
- A decision tree classifier is a class that can perform multi-class classification on a dataset.
- The decision tree classifier takes two arrays as input: array X holds the training samples and array Y holds the class labels for those samples.
- A decision tree classifier supports binary classification as well as multiclass classification.

The code for this section loads the iris data from the sklearn library and also imports tree from sklearn.

- `load_iris()` is used to load the dataset.
- `X, Y = iris.data, iris.target` assigns the feature values to X and the class labels to Y.
- `clasifier = tree.DecisionTreeClassifier()` creates the decision tree classifier.
- Calling `fit` on the classifier fits the data to the tree.
- `tree.plot_tree(clasifier)` is used to plot the decision tree on the screen.

After running the code, the output shows the decision tree plotted on the screen.

Also, check: Scikit-learn logistic regression

- We can also select the best split point in the decision tree.
- As the picture above shows, a node is split into sub-nodes.
- The decision tree evaluates splits on all the available variables and selects the split that results in the most homogeneous sub-nodes.
- The time complexity of the decision tree is a function of the number of records and the number of attributes in the given data.
- The decision tree is a non-parametric method: it does not depend on assumptions about the probability distribution of the data.
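To make the idea of choosing the most homogeneous split concrete, here is a small pure-Python sketch. It assumes Gini impurity as the homogeneity measure (the text does not name one; Gini is the default criterion of scikit-learn's `DecisionTreeClassifier`), and the helper names `gini` and `best_split` as well as the toy data are hypothetical.

```python
from collections import Counter


def gini(labels):
    """Gini impurity: 0.0 for a pure node, larger for mixed nodes."""
    n = len(labels)
    if n == 0:
        return 0.0
    counts = Counter(labels)
    return 1.0 - sum((c / n) ** 2 for c in counts.values())


def best_split(rows, labels):
    """Try every feature and every midpoint threshold.

    Returns (weighted_impurity, feature_index, threshold) for the split
    whose two sub-nodes are the most homogeneous.
    """
    n = len(rows)
    best = None
    for feature in range(len(rows[0])):
        values = sorted({row[feature] for row in rows})
        for low, high in zip(values, values[1:]):
            threshold = (low + high) / 2
            left = [lab for row, lab in zip(rows, labels) if row[feature] <= threshold]
            right = [lab for row, lab in zip(rows, labels) if row[feature] > threshold]
            impurity = (len(left) * gini(left) + len(right) * gini(right)) / n
            if best is None or impurity < best[0]:
                best = (impurity, feature, threshold)
    return best


# Toy data (hypothetical): feature 0 separates the classes, feature 1 does not.
rows = [[2.0, 7.0], [3.0, 1.0], [4.0, 6.0], [6.0, 2.0], [7.0, 8.0], [8.0, 3.0]]
labels = ["a", "a", "a", "b", "b", "b"]

impurity, feature, threshold = best_split(rows, labels)
print(impurity, feature, threshold)  # 0.0 0 5.0
```

The nested loops also show why the cost grows with both the number of records and the number of attributes: every candidate threshold of every attribute is scored against all records.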